I am a second-year PhD student in Robotics Engineering at WPI, working in the Manipulation and Environmental Robotics Lab with Prof. Berk Calli.

Watching Yamaha's Motobot struggle against Valentino Rossi got me thinking about the gap between perceiving an environment and making the right decision in it, especially in conditions the system wasn't built for. That question has shaped most of my research.

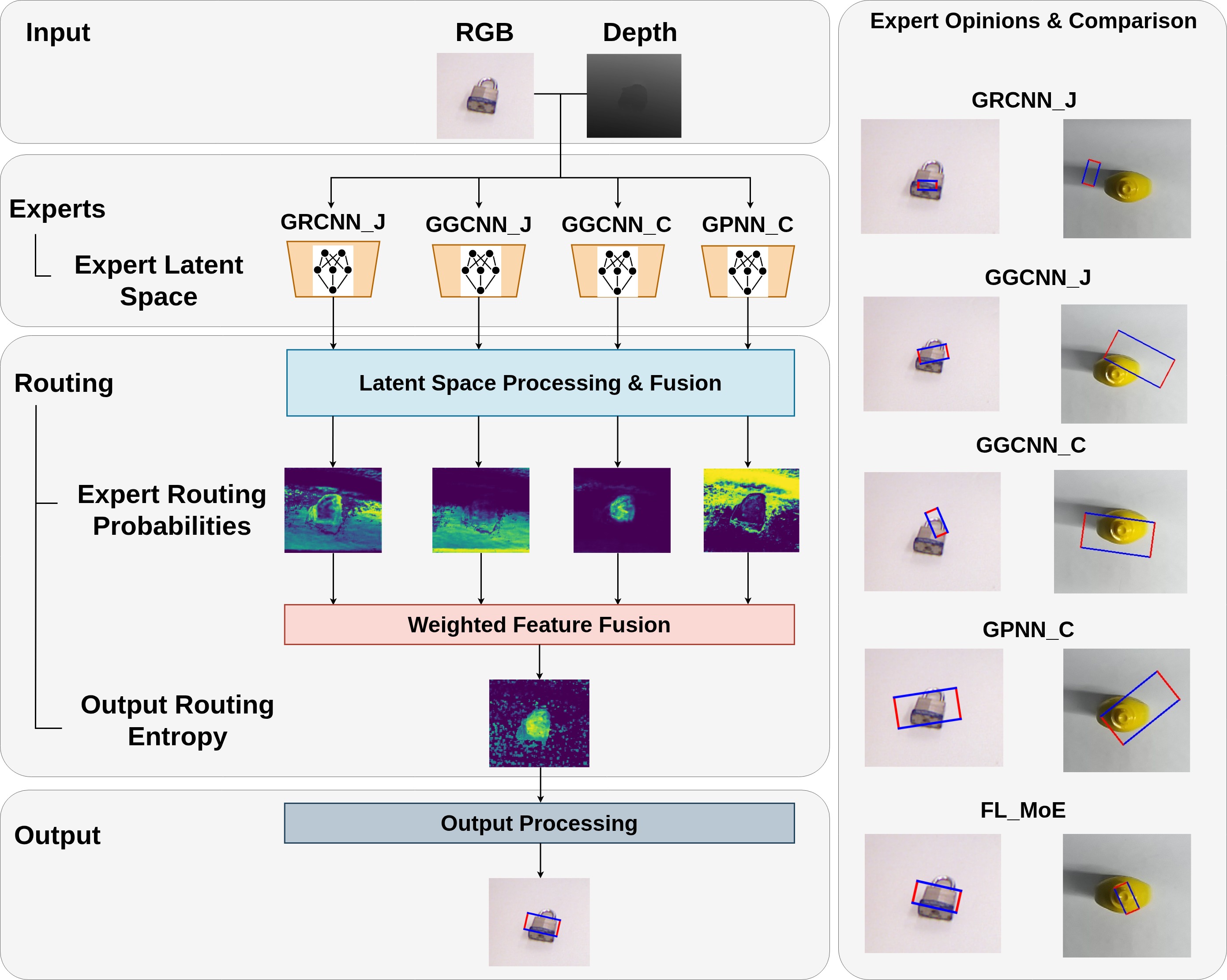

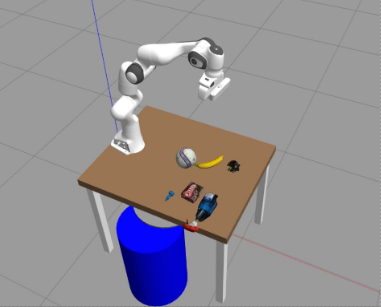

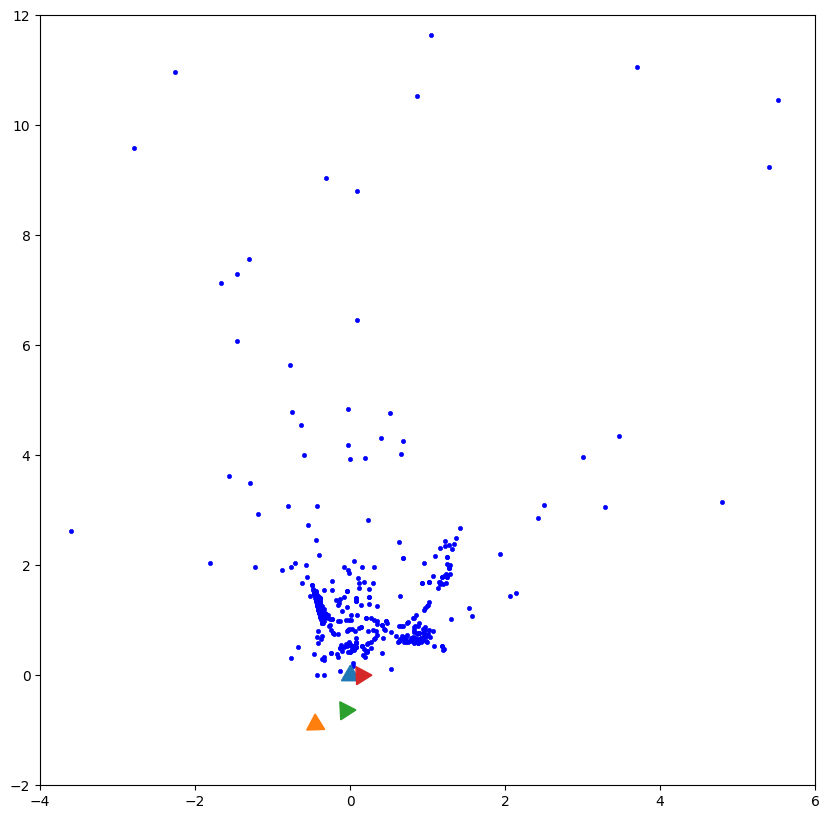

I came to robotics through Electronics Engineering in Pune, India, which means I think about systems from the hardware up. My work spans robot perception, deep learning for manipulation, and vision-language reasoning, all validated on real robots rather than only in simulation.

Outside the lab I am a competitive swimmer and a die-hard soccer fan (Glory Glory Man United!). The discipline of early-morning training has been useful, especially when a model training run fails at 3am.

I am looking for internship roles in robot perception, computer vision, deep learning, and physical AI, with teams building systems that need to keep working when the situation changes.